
I think it is safe to say that a legitimate Screaming Frog would not be asking for, say, /admin/ on a site that has no such path (and certainly has no external links to it). Unlike the once-popular fake Googlebot, which is easily flagged by IP, an impostor might find SF authorized by name, so that's a reason for claiming to be SF.

SF robots.txt requests followed by a request for one page: 15, i.e. all but one of them, including several that definitely encountered a Disallow. Why bother to ask for robots.txt if they have already decided to ignore it? Are “ask” and “honor” separate settings?
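A tally like the one above can be reproduced with a short script. The following is only a sketch, assuming the logs are in the common "combined" access-log format and that the visitor identifies itself with a user-agent string containing "Screaming Frog"; the sample lines, IP addresses, and the function name `robots_then_page` are made up for illustration and do not come from the real logs.

```python
import re

# Assumed "combined" log format; adjust the pattern to your server's format.
LOG_LINE = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def robots_then_page(lines, agent_marker="Screaming Frog"):
    """Count robots.txt requests by matching agents, and how many of those
    were followed by a request for some other path from the same IP."""
    robots_total = 0
    followed = 0
    awaiting = set()  # IPs whose most recent matching request was robots.txt
    for line in lines:
        m = LOG_LINE.match(line)
        if not m or agent_marker not in m["agent"]:
            continue
        ip, path = m["ip"], m["path"]
        if path == "/robots.txt":
            robots_total += 1
            awaiting.add(ip)
        elif ip in awaiting:
            followed += 1
            awaiting.discard(ip)
    return robots_total, followed


# Hypothetical sample data (documentation IP ranges, invented timestamps).
SAMPLE = [
    '203.0.113.5 - - [01/Jan/2021:00:00:01 +0000] "GET /robots.txt HTTP/1.1" 200 120 "-" "Screaming Frog SEO Spider/13.2"',
    '203.0.113.5 - - [01/Jan/2021:00:00:02 +0000] "GET /admin/ HTTP/1.1" 404 0 "-" "Screaming Frog SEO Spider/13.2"',
    '198.51.100.7 - - [01/Jan/2021:00:05:00 +0000] "GET /robots.txt HTTP/1.1" 200 120 "-" "Screaming Frog SEO Spider/13.2"',
    '192.0.2.9 - - [01/Jan/2021:00:06:00 +0000] "GET /robots.txt HTTP/1.1" 200 120 "-" "Mozilla/5.0"',
]

if __name__ == "__main__":
    total, followed = robots_then_page(SAMPLE)
    print(f"robots.txt requests: {total}, followed by a page request: {followed}")
```

Matching on IP alone is a simplification; a stricter version might also require the follow-up request to arrive within a few seconds of the robots.txt request.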

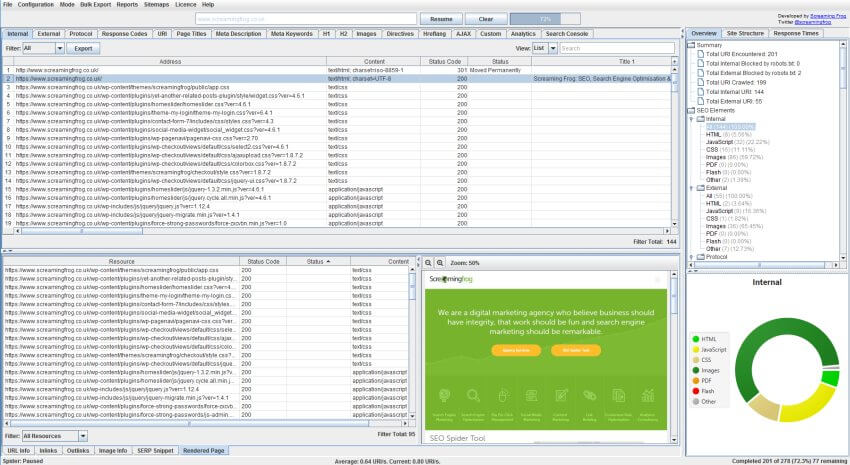

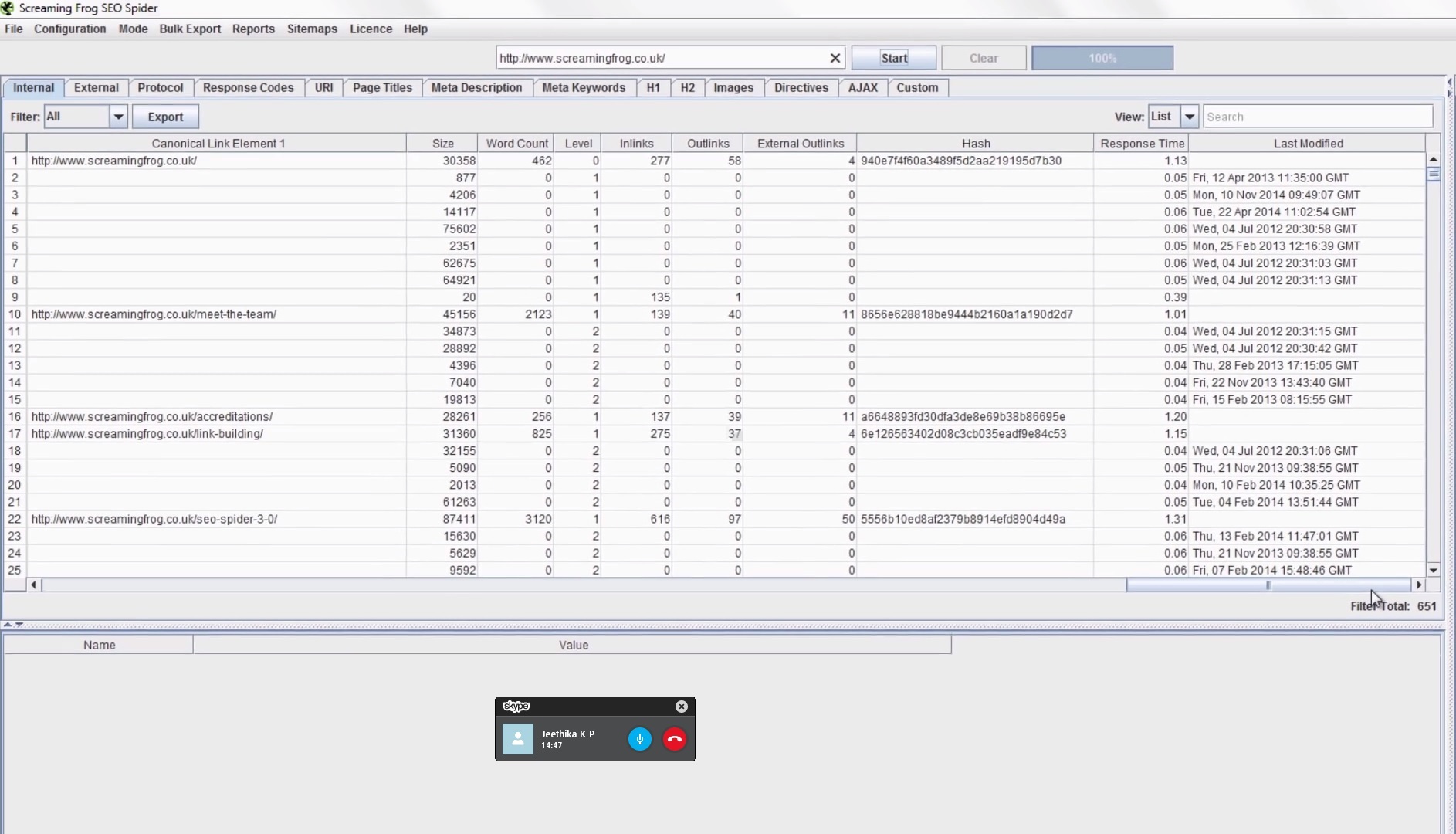

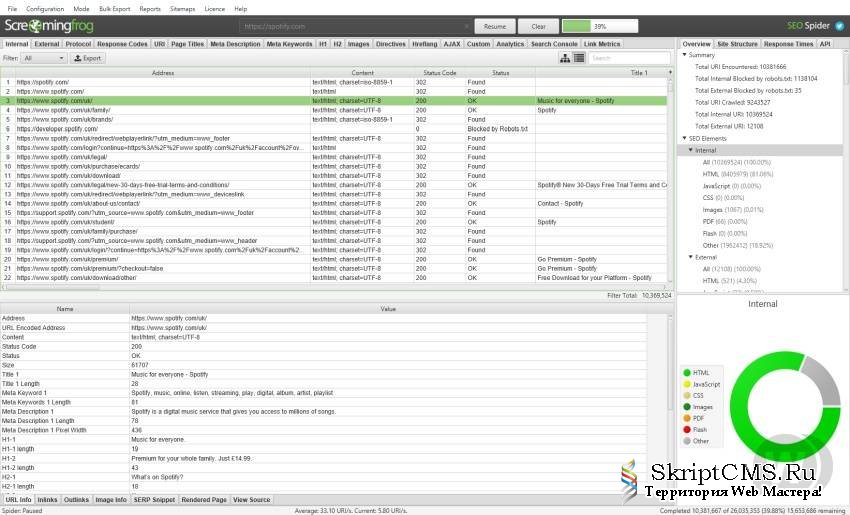

:: deeper delve into archived logs, followed by a look at robots.txt and the header access file, which I forgot to do earlier ::

Screaming Frog has been disallowed in robots.txt since some time in 2019, as the first step in deciding whether to poke a hole. In their case actually the second step, because that “sometime in 2019” is when I put them on a separate line after the more concise shared-block didn't work.

SF requests for robots.txt: 16, or about 1/8 of all requests (a few of them in current non-archived logs, so I don't know how I overlooked them before).

The most common SEO Spider tool is by Screaming Frog. The Screaming Frog SEO Spider Tool is widely used in digital marketing and ecommerce, and is considered to be one of the best on-page SEO auditing tools available. It provides a user-friendly interface to a powerful site crawler, and helps you improve on-page SEO by crawling and auditing your site for common SEO issues. It understands how search engines work and has a ton of powerful features to help you rank higher.

The Screaming Frog SEO Spider can be downloaded by clicking on the appropriate download button for your operating system and then running the installer.

To create an XML sitemap:

1) Open up the SEO Spider, type or copy in the website you wish to crawl in the ‘enter url to spider’ box and hit ‘Start’.

2) Click ‘Sitemaps > XML Sitemap’. When the crawl has reached 100% and finished, click the ‘XML Sitemap’ option under ‘Sitemaps’ in the top level menu. This will open up a number of sitemap configuration options.

Now you can run the command line like this (the config path is cut off in the original):

`screamingfrogseospider --config "/home/basvanbeek/scream/config.`

Running `screamingfrogseospider --help` lists the available options. Among them are options to:

- supply a config file for the spider to use;
- start crawling the specified URLs in list mode;
- name this invocation of the SEO Spider (the name will be used as the crawl name when in DB storage mode);
- set the project name of the crawl in DB storage mode.

Screaming Frog 2021 Complete Guide (SEO Spider and Website Crawler), Chase Reiner.

These are just a few of the many great SEO tools available.
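To see what a compliant crawler should conclude from a robots.txt that names Screaming Frog on its own line, the rules can be fed to Python's standard `urllib.robotparser`. This is a minimal sketch under stated assumptions: the robots.txt text below is invented (the real file is not shown here), `Screaming Frog SEO Spider` is assumed to be the user-agent token the crawler matches on, and `example.com` and `SomeOtherBot` are placeholders.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: Screaming Frog in its own record, not a shared block.
ROBOTS_TXT = """\
User-agent: Screaming Frog SEO Spider
Disallow: /

User-agent: *
Disallow: /private/
"""


def is_allowed(robots_text, user_agent, url):
    """Return True if a rule-following crawler with this user agent may fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    page = "https://example.com/page.html"
    # Assumed user-agent strings, for illustration only.
    print(is_allowed(ROBOTS_TXT, "Screaming Frog SEO Spider/13.2", page))
    print(is_allowed(ROBOTS_TXT, "SomeOtherBot/1.0", page))
```

This only shows what the rules say. A visitor that requests robots.txt and then fetches a disallowed page anyway, as in the logs described above, is simply not applying them.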

The SEO Spider tool from Screaming Frog is a free application that lets you crawl your site like a search engine and find any trouble spots you can correct. It is a desktop app used to crawl website links, apps, images, and CSS with SEO in mind, and it is a great tool for improving your website's crawlability and indexability, if you happen to get the hang of using it. The Screaming Frog Log File Analyser is an SEO auditing tool, built by real SEOs with thousands of users worldwide.

On a Debian-based system, install the dependencies:

`sudo apt-get install xdg-utils zenity libgconf-2-4 fonts-wqy-zenhei`

`sudo apt-get install cabextract xfonts-utils`

`sudo apt install ttf-mscorefonts-installer`

Then install the downloaded package:

`sudo dpkg -i screamingfrogseospider_13.2_all.deb`
